CPUs and Debian Package Building

Introduction

I have just bought an HP Z4 G4 with a W-2125 CPU for $320 and decided it was a good time to do some benchmarks on Debian package building to see which system I should use for that.

The W-2125 CPU scores only 9,954 on the Passmark multithread test but 2,546 on single thread [1]. The only DDR3 system that's important to me at the moment is the HP Z420 workstation my parents use (which cost me $750 in 2021); its E5-2620 CPU scores 5,325 for multithread and 1,113 for single thread [2]. From the Passmark results one would expect the new system to be slightly more than twice as fast as the Z420 for operations that use fewer than 4 CPU cores, but Passmark seems to have some limitations as a predictor of build performance, as the results below show.
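The "slightly more than twice as fast" expectation is simply the ratio of the single thread scores. A trivial awk sketch of that arithmetic (`ratio` is a hypothetical helper name):

```shell
# Hypothetical helper: ratio of two benchmark scores to 2 decimal places
ratio() {
    awk -v a="$1" -v b="$2" 'BEGIN { printf "%.2f\n", a / b }'
}

ratio 2546 1113   # single thread, W-2125 vs E5-2620: prints 2.29
ratio 9954 5325   # multithread, W-2125 vs E5-2620: prints 1.87
```

The multithread ratio is much lower because the E5-2620 has 6 cores against the 4 of the W-2125.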

I ran the initial tests on the Z4 G4 with hyper-threading enabled. 4 cores isn't much by today's standards, and this machine will be less exposed to hostile data and will contain less secret data than most of my systems, so the security risks of hyper-threading are less of a concern.

I tested a couple of tasks that are very important to me: building the SE Linux policy packages (something I may do a dozen times in a day) and building Warzone 2100 (which I do less often but is the most intensive build process I regularly run). At the bottom of this post there are tables with the results from building these packages on my Z640 workstation with an E5-2696 v4 CPU [3], the Z420, and the new machine.

I also tested building the Warzone 2100 package on my dual CPU Z840 [4]. I didn't test building the SE Linux policy on the Z840 this time because that package can't take advantage of even 22 cores. When I first got the Z840 running it built the policy packages faster than the Z640, because at the time the Z640 had an older CPU that was slower for single core operations than the CPUs in the Z840.

BTRFS Compression

For some time I have noticed large variations in compile time on my workstation, by a factor of more than 2. When I investigated further, "top" showed something like the following. Those kernel threads are all BTRFS related, except for "gfx", which is probably something graphical caused by running Chrome with about 300 tabs open.

```
2144316 root 20 0 0 0 0 I 26.6 0.0 0:36.76 kworker/u88:20-btrfs-endio-write
2221470 root 20 0 0 0 0 I 23.7 0.0 0:01.85 kworker/u88:12-gfx
2221436 root 20 0 0 0 0 I 15.1 0.0 0:07.48 kworker/u88:8-btrfs-compressed-write
2166191 root 20 0 0 0 0 I 12.8 0.0 0:15.80 kworker/u88:23-btrfs-compressed-write
2126387 root 20 0 0 0 0 I 10.2 0.0 1:29.11 kworker/u88:4-events_unbound
```

I had been running BTRFS with the mount option "compress=zstd:15", which caused many of the performance problems when building. The problem was also intermittent, which I think happened because the BTRFS write-back (done every 30 seconds by default) sometimes took more than 30 seconds during the build process, so a second write-back started before the first one had finished.

I tested ZSTD compression levels 5, 8, 10, and 15. Level 15 was never good and often really bad. Level 10 was not unbearable but consistently slower. Level 8 was sometimes as fast as 5 and sometimes quite a bit slower. I didn't test levels below 5 because I need to have some compression, and it seemed that the benefits of reducing compression were dropping off below 8.
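Switching levels for tests like these doesn't need a reboot. A minimal sketch, assuming it is run as root and that the filesystem of interest is mounted at / (the helper name is hypothetical, and it deliberately accepts only levels 1 to 15, the range documented for BTRFS zstd):

```shell
# Hypothetical helper: remount a BTRFS filesystem with a new zstd level.
# Only data written after the remount uses the new level; existing extents
# keep whatever compression they were written with.
set_zstd_level() {
    level=$1 mnt=${2:-/}
    case $level in
        [1-9]|1[0-5]) ;;
        *) echo "set_zstd_level: zstd level must be 1-15, got '$level'" >&2
           return 1 ;;
    esac
    mount -o remount,compress=zstd:"$level" "$mnt" &&
        findmnt -no OPTIONS "$mnt"   # show the options now in effect
}

# The persistent equivalent in /etc/fstab (UUID is a placeholder):
#   UUID=...  /  btrfs  defaults,compress=zstd:5  0  0
```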

I found that the BTRFS compression delay is not counted in the system time of the build process, as the compression is done by kernel threads. I think it's the fsync() system calls in the semodule and dpkg-deb programs that cause the delays, by making those programs wait for the kernel threads to finish compressing the data.
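One way to test that theory is to trace the sync system calls during a build with `strace -T`, which appends the time spent in each call in angle brackets. The sketch below is a hypothetical helper that totals those times per call; it assumes a log produced by something like `strace -f -T -e trace=fsync,fdatasync -o build.strace dpkg-buildpackage -b -uc -us` (the log file name is made up):

```shell
# Hypothetical helper: count and total time per syscall in an strace -f -T log.
# Reads lines such as:
#   12345 fsync(3)                          = 0 <0.532101>
#   12345 <... fdatasync resumed>)          = 0 <1.002000>
sync_time() {
    awk '
        match($0, /<[0-9.]+>$/) {
            secs = substr($0, RSTART + 1, RLENGTH - 2)
            name = $0
            sub(/^[0-9]+ +/, "", name)          # drop the pid added by -f
            if (name ~ /^<\.\.\. /) {           # continuation of an interrupted call
                sub(/^<\.\.\. /, "", name)
                sub(/ resumed>.*/, "", name)
            } else {
                sub(/\(.*/, "", name)           # keep the text before "("
            }
            total[name] += secs
            count[name]++
        }
        END { for (n in total) printf "%s %d %.3f\n", n, count[n], total[n] }
    ' "$@"
}

# Example: sync_time build.strace
```

Each output line is the call name, how many times it completed, and the total seconds spent in it.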

BOINC

I have all my systems other than laptops running BOINC in the background so that CPU power is used for scientific research when I don’t have any personal use for it [5]. I believe that it’s immoral to waste CPU power when it could be used for research.

In the SE Linux policy table below, which has results from building the package with and without BOINC and with different ZSTD compression levels in BTRFS, all the worst entries were from runs with BOINC in the background, apart from one that used ZSTD level 15 compression. The really poor performance with ZSTD level 15 was an outlier, but it wasn't an uncommon outlier so I left it in.

Running BOINC in the background, configured to use all CPU cores, caused a significant increase in "user CPU time" (the time a CPU core spent actually running the build). My initial thought was that this is partly related to "turbo boost".

The Intel ARK page for the CPU in the Z420 shows that its base clock speed is 2.0GHz with a 2.5GHz "turbo boost" [6]. Turbo boost apparently depends largely on temperature, and the maximum speed is limited to one core, so if all the other CPU cores are busy the CPU will probably be too hot for turbo boost, and even if it does happen it might not be applied to my compile processes.
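If turbo boost is the cause, it should show up in the clock speeds the cores actually run at. A minimal sketch that summarises the "cpu MHz" lines of /proc/cpuinfo (the helper name is hypothetical; /proc/cpuinfo is only a snapshot, and a tool such as turbostat gives a more accurate picture):

```shell
# Hypothetical helper: min, average and max "cpu MHz" across all logical CPUs.
# Takes an optional file in /proc/cpuinfo format, defaulting to the live one.
cpu_mhz() {
    awk -F: '
        /^cpu MHz/ {
            mhz = $2 + 0
            if (n == 0 || mhz < min) min = mhz
            if (mhz > max) max = mhz
            sum += mhz
            n++
        }
        END { if (n) printf "min %.0f avg %.0f max %.0f\n", min, sum / n, max }
    ' "${1:-/proc/cpuinfo}"
}
```

Running `cpu_mhz` a few times during a single threaded part of a build, with and without BOINC, would show whether the busy core ever gets near the turbo frequency.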

The ARK page for the E5-2699 v4 [7] (a similar CPU to the E5-2696 v4 that I'm using, but one that Intel officially documents) shows a base clock speed of 2.2GHz and a turbo boost speed of 3.6GHz. For the Z640 builds with ZSTD level 5, 322 vs 244 seconds of user CPU time with and without BOINC means running 32% slower, which could plausibly be explained by the loss of a 64% turbo boost (3.6GHz vs 2.2GHz) with a bit of help from the 55MB L3 cache being thrashed.

Turbo boost would only be a noticeable issue for building packages like the SE Linux policy packages, which don't take much advantage of multi-core CPUs. For a build process to average at best 362% CPU use, large parts of it must be limited to one or two cores, and those parts could potentially benefit from turbo boost.
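The timing columns in the tables match the default output of GNU time (/usr/bin/time), where CPU% is (user + system) / elapsed. For the best Z640 run that is 304.40 seconds of CPU over 83.88 seconds elapsed, an average of about 3.6 busy cores. A minimal sketch that recomputes the column (the helper name is hypothetical; like GNU time it truncates rather than rounds):

```shell
# Hypothetical helper: recompute CPU% from GNU time style fields such as
# "248.82user 55.58system 1:23.88elapsed" (elapsed is [H:]M:SS.ss)
cpu_pct() {
    awk -v u="${1%user}" -v s="${2%system}" -v e="${3%elapsed}" 'BEGIN {
        n = split(e, part, ":")
        secs = 0
        for (i = 1; i <= n; i++) secs = secs * 60 + part[i]
        printf "%d%%CPU\n", (u + s) * 100 / secs
    }'
}

cpu_pct 248.82user 55.58system 1:23.88elapsed   # prints 362%CPU, matching the table
```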

When building the Warzone 2100 packages, most of the build time is spent running basis-universal, a multi-threaded program that compresses GPU texture data. This usually causes a load average of 300+ on the Z640 or 600+ on the Z840. Yet the build time still increases by more than 50% on both the Z640 and the Z840 when BOINC is running in the background. As all cores are busy during such a build there is little scope for turbo boost anyway, so this seems to indicate that the slowdown is not related to turbo boost. I verified that BOINC is running with the SCHED_IDLE scheduling policy with the following command:

```
# chrt -p $(pidof -s einstein_O4MD_2.01_x86_64-pc-linux-gnu)
pid 2974874's current scheduling policy: SCHED_IDLE
pid 2974874's current scheduling priority: 0
```

In theory this means that BOINC won’t affect foreground processes.
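The same tool can start a process under SCHED_IDLE, which is handy for checking how a given job behaves when deprioritised. A minimal sketch (`run_idle` is a hypothetical wrapper name; lowering a process to SCHED_IDLE doesn't normally need root):

```shell
# Hypothetical wrapper: run a command under the SCHED_IDLE policy
# (chrt requires priority 0 with --idle)
run_idle() {
    chrt --idle 0 "$@"
}

# An already running process can be switched the same way:
#   chrt --idle -p 0 PID
# and "ps -eo pid,cls,comm" shows IDL in the CLS column for SCHED_IDLE tasks.

# The child shell reports its own policy, inherited from chrt
run_idle sh -c 'chrt -p $$'
```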

Hyper Threading on the W-2125

The best claims I've seen about HT are a 15% to 30% performance boost, and the best I've actually seen in the past is about 18%. Seeing a 10% benefit for building Warzone 2100 is at the low end of the range I expected, but 8 hardware threads is not many for a build process that causes a load average of 600+ on a system with 44 real cores.

I was surprised to see a 6% performance benefit in hyper-threading for building the SE Linux policy as I didn’t think there was enough use of threading or multiple processes to allow that.

Many build scripts run a number of processes that matches the number of apparent CPU cores. While "make -j 88" might give a theoretical performance benefit on a 44 core system, it will also take a lot of RAM, and any paging will outweigh the benefits of hyper-threading. On a system with only 4 real cores there's less potential for using too much RAM, and as security isn't so important on that system I will leave hyper-threading enabled.
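For Debian builds the job count can be capped with DEB_BUILD_OPTIONS=parallel=N (whether a package honours it depends on its debian/rules; debhelper based packages generally do). A minimal sketch of choosing N from physical cores and a RAM budget; the helper names and the per-job memory figure are assumptions:

```shell
# Hypothetical helpers: limit parallel jobs by physical cores (ignoring
# hyper-threads) and by an assumed RAM budget per compile job.
physical_cores() {
    # unique (socket, core) pairs; lscpu -p prints comment lines starting with #
    lscpu -p=SOCKET,CORE | grep -v '^#' | sort -u | wc -l
}

build_jobs() {
    cores=$1 avail_mb=$2 per_job_mb=$3
    by_ram=$((avail_mb / per_job_mb))
    [ "$by_ram" -ge 1 ] || by_ram=1
    if [ "$by_ram" -lt "$cores" ]; then echo "$by_ram"; else echo "$cores"; fi
}

# Example, assuming 64GB available and about 1GB per job:
#   DEB_BUILD_OPTIONS="parallel=$(build_jobs "$(physical_cores)" 65536 1024)" \
#       dpkg-buildpackage -b -uc -us
```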

Comparing the CPUs

For building the SE Linux policy, the best results of the Z640 and Z4G4 are only 50% faster than the best result of the Z420, much less than the Passmark single thread scores suggest.
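The 50% figure comes from the elapsed times in the SE Linux policy table. A minimal sketch of that comparison (`speedup` is a hypothetical helper name):

```shell
# Hypothetical helper: how much faster run B is than run A, given GNU time
# elapsed values such as "2:07.33" ([H:]M:SS.ss)
speedup() {
    awk -v a="$1" -v b="$2" '
        function secs(t,    part, n, i, s) {
            n = split(t, part, ":")
            s = 0
            for (i = 1; i <= n; i++) s = s * 60 + part[i]
            return s
        }
        BEGIN { printf "%.0f%%\n", (secs(a) / secs(b) - 1) * 100 }'
}

speedup 2:07.33 1:24.93   # Z420 vs Z4G4, no BOINC, zstd level 5: prints 50%
speedup 2:07.33 1:23.88   # Z420 vs the best Z640 run: prints 52%
```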

The Z420 has an E5-2620 CPU, which is far from the fastest CPU available for that system. The E5-2687W has 8 cores and rates 10,021/1,669 on Passmark [8], far better than the 5,331/1,114 of the E5-2620. The E5-2687W is the fastest CPU that HP lists as supported by the Z420, and it supports DDR3-1600 RAM as opposed to the DDR3-1333 that is the fastest the E5-2620 supports. With suitable hardware upgrades the Z420 would probably take only about 20% longer to build the SE Linux policy and other packages that can't take advantage of more than 8 CPU cores.

The Z4G4 has 4 RAM channels, which means you should get some performance benefit from having 4 DIMMs. My system currently has 2 and I haven't yet managed to get more DDR4-2666 DIMMs. But I'd still have expected a W-2125 CPU with 2*DDR4-2666 DIMMs to outperform any E5-26xx CPU with 4*DDR4-2400 DIMMs for tasks that average fewer than 4 CPU cores.

In retrospect I would have been better off getting an HP Z820 (a two socket workstation with DDR3 RAM) than the first DDR4 systems I got. It seems that for reasonably sized builds a two socket system comes close to twice the speed of a single socket system. I did briefly own an HP ML350 two CPU system with DDR3 RAM, but it was too noisy for my intended use as a deskside workstation so I sold it.

Things to Investigate

I plan to investigate BTRFS compression further: how to get the best compression without excessive delays, and how to recognise when delays are happening. I have some SSDs with sustained write speeds as low as 15MB/s (Crucial P1 series), so for those I could probably use very high compression levels without slowing the system down.
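One crude way to recognise such delays is to watch how much CPU the BTRFS kernel workers use during a build, since that work isn't charged to the build process. A minimal sketch that sums their %CPU from top's batch output; the helper name is hypothetical, and it assumes top's default column layout (%CPU in field 9) and kworker names with the workqueue appended, as in the top output earlier in this post:

```shell
# Hypothetical helper: number of BTRFS kworker threads in top -b output
# and their combined %CPU
btrfs_busy() {
    awk '$NF ~ /^kworker\/.*btrfs/ { sum += $9; n++ }
         END { printf "%d threads %.1f%%CPU\n", n, sum }'
}

# Usage, sampling every 5 seconds during a build
# (-w 512 stops long command names being truncated):
#   while sleep 5; do top -b -n 1 -w 512 | btrfs_busy; done
```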

The fact that BOINC slows things down so much seems to be a bug: when processes are running with the IDLE scheduling class there shouldn't be such significant delays. Is it due to cache thrashing? How can I best get BOINC suitably throttled when I'm sitting at my workstation? I don't want BOINC connecting to the local X server (which it repeatedly tries to do). Do I need to tune my kernel for better handling of IDLE scheduling?
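One blunt answer to the throttling question, rather than a fix for the scheduling problem, is to suspend BOINC computation while a build runs. A minimal sketch using `boinccmd --set_run_mode`; the wrapper name and the one hour limit are assumptions, and depending on the installation boinccmd may also need `--passwd` with the GUI RPC password:

```shell
# Hypothetical wrapper: suspend BOINC computation while a command runs and
# return to the preference-based mode afterwards, even if the command fails.
# "never 3600" suspends for at most an hour, so a killed script cannot
# leave BOINC suspended indefinitely.
build_without_boinc() (
    boinccmd --set_run_mode never 3600 || exit 1
    trap 'boinccmd --set_run_mode auto' EXIT
    "$@"
)

# Example:
#   build_without_boinc dpkg-buildpackage -b -uc -us
```

The function body is a subshell so the EXIT trap fires when the build finishes without affecting the calling shell.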

When I get more DIMMs in the Z4G4 I need to do more tests to see if it gives an overall performance boost.

The Z4G4 also has a BIOS option for "sub NUMA", which basically means treating the different RAM channels on a single CPU as separate NUMA zones. I enabled that option but it does nothing, presumably because I only have 2 DIMMs; the results when I have 4 DIMMs will be interesting. I will also do some NUMA tests on the Z840 to see what benefits it gives.

I have a selection of RAM speeds that will work in the Z4G4; if I have enough spare time I'll test what difference that makes for CPU bound tasks that matter to me.

For package building fsync() is not helpful: if the system crashes before the build is done I will just do the build again. For a build cluster it is probably a good feature and probably doesn't affect aggregate performance when multiple packages are built at the same time, but for the single user case it's probably not worth the delays. I will investigate libeatmydata for package building [9].
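libeatmydata provides an `eatmydata` wrapper command that turns fsync() and related calls into no-ops for the program it runs. A minimal sketch of using it for builds, with a fallback when it isn't installed (`nosync` is a hypothetical name):

```shell
# Hypothetical wrapper: run a command with fsync() disabled via eatmydata,
# or normally if eatmydata isn't installed. Only sensible for throwaway
# output: after a crash, rebuild from scratch.
nosync() {
    if command -v eatmydata >/dev/null 2>&1; then
        eatmydata "$@"
    else
        echo "nosync: eatmydata not found, running with normal fsync()" >&2
        "$@"
    fi
}

# Example:
#   nosync dpkg-buildpackage -b -uc -us
```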

Conclusion

The progress in CPUs seems to have slowed down a lot recently. The main benefits seem to be more CPU cores and newer sockets with more RAM channels.

The CPUs that do have improvements in single core performance are the i9 series (which mostly don't come with motherboards supporting ECC) and AMD CPUs (which are rare in enterprise class hardware). Maybe I should get a server with an i9 or AMD CPU for tasks that need a fast turnaround with a small number of cores. That would probably outperform any CPU designed for large core counts for things like building the policy and setting up test VMs (which depends on package installation speed, which is bottlenecked on a single core).

The W-21xx CPUs seem to offer little benefit over the E5-26xx v4 CPUs and not a lot of benefit over E5-26xx CPUs (with DDR3). Even the W-22xx CPUs look like they won't offer a lot, as they are only an incremental improvement over the W-21xx series. I had considered making the Z4G4 my main desktop workstation once the high end W CPUs become affordable, but it looks like that won't be worth it until such CPUs drop from the current eBay price of $900 to $100.

I think I’ll keep waiting for a decent socket LGA3647 or DDR5 based server [10] for my next significant upgrade.

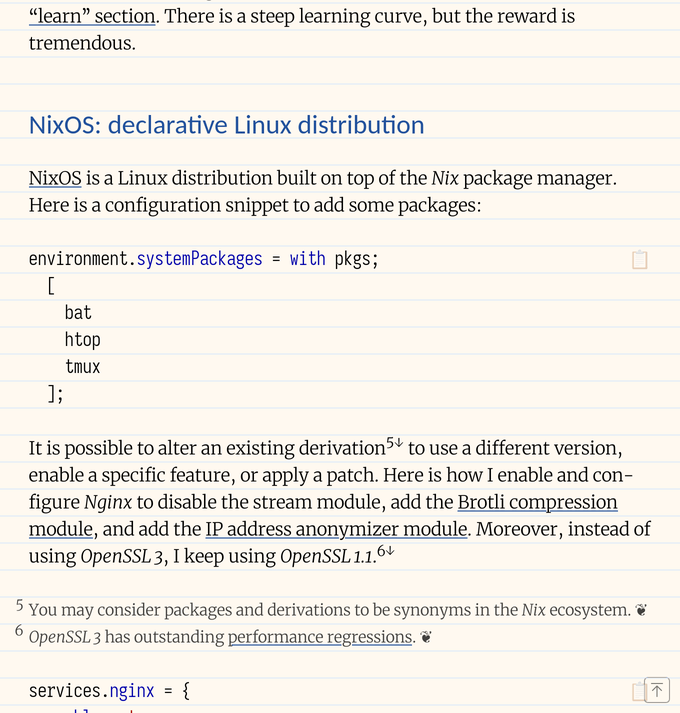

Tables

Building SE Linux Refpolicy

| System | BOINC | zstd level | CPU time | Elapsed | CPU% |
|---|---|---|---|---|---|
| Z640 | no | 8 | 248.82user 55.58system | 1:23.88elapsed | 362%CPU |
| Z4G4 | no | 5 | 245.15user 34.63system | 1:24.93elapsed | 329%CPU |
| Z640 | no | 5 | 244.75user 34.87system | 1:25.98elapsed | 325%CPU |
| Z4G4 | no | 10 | 245.21user 35.64system | 1:29.63elapsed | 313%CPU |
| Z640 | no | 8 | 248.71user 55.90system | 1:33.01elapsed | 327%CPU |
| Z640 | no | 10 | 250.90user 55.78system | 1:42.12elapsed | 300%CPU |
| Z640 | yes | 8 | 298.19user 69.30system | 1:59.77elapsed | 306%CPU |
| Z640 | yes | 10 | 300.58user 68.90system | 2:01.53elapsed | 304%CPU |
| Z420 | no | 5 | 359.01user 44.95system | 2:07.33elapsed | 317%CPU |
| Z640 | yes | 5 | 322.40user 71.82system | 2:34.66elapsed | 254%CPU |
| Z420 | yes | 5 | 372.03user 42.95system | 2:42.15elapsed | 255%CPU |
| Z640 | yes | 15 | 299.26user 67.18system | 2:59.77elapsed | 203%CPU |
| Z640 | no | 15 | 250.05user 54.60system | 3:07.61elapsed | 162%CPU |

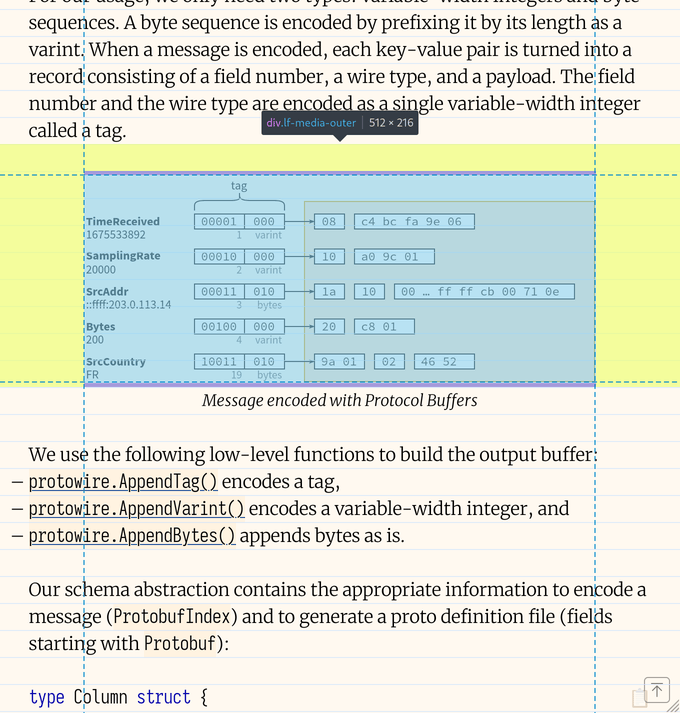

Building Warzone 2100

| System | BOINC | zstd level | CPU time | Elapsed | CPU% |
|---|---|---|---|---|---|
| Z840 | no | 10 | 6549.21user 89.46system | 4:18.90elapsed | 2564%CPU |
| Z840 | no | 5 | 6533.81user 90.50system | 4:19.24elapsed | 2555%CPU |
| Z640 | no | 5 | 7040.87user 183.12system | 7:13.50elapsed | 1666%CPU |
| Z840 | yes | 5 | 8039.52user 169.62system | 8:02.86elapsed | 1700%CPU |
| Z640 | yes | 5 | 7486.44user 205.03system | 11:09.97elapsed | 1148%CPU |
| Z4G4 | no | 5 | 7891.32user 74.45system | 17:48.03elapsed | 745%CPU |
| Z4G4 | no | 10 | 7942.10user 77.43system | 17:58.72elapsed | 743%CPU |

Hyper-Threading on the Z4G4

| Build | HT | zstd level | CPU time | Elapsed | CPU% |
|---|---|---|---|---|---|
| Warzone | yes | 5 | 7891.32user 74.45system | 17:48.03elapsed | 745%CPU |
| Warzone | yes | 10 | 7942.10user 77.43system | 17:58.72elapsed | 743%CPU |
| Warzone | no | 5 | 4492.45user 59.09system | 19:59.01elapsed | 379%CPU |
| Warzone | no | 10 | 4497.28user 59.46system | 20:07.15elapsed | 377%CPU |
| Refpolicy | yes | 5 | 245.15user 34.63system | 1:24.93elapsed | 329%CPU |
| Refpolicy | yes | 10 | 245.21user 35.64system | 1:29.63elapsed | 313%CPU |
| Refpolicy | no | 5 | 180.84user 29.74system | 1:32.30elapsed | 228%CPU |
| Refpolicy | no | 10 | 180.29user 30.07system | 1:35.01elapsed | 221%CPU |

- [1] https://tinyurl.com/2ddf7t5y

- [2] https://tinyurl.com/kgmagfs

- [3] https://etbe.coker.com.au/2026/04/10/hp-z640-e5-2696-v4/

- [4] https://etbe.coker.com.au/2025/04/05/hp-z840/

- [5] https://boinc.berkeley.edu/

- [6] https://tinyurl.com/2mopjxgc

- [7] https://tinyurl.com/2r3j4bzg

- [8] https://tinyurl.com/reu2p84

- [9] https://www.flamingspork.com/projects/libeatmydata/

- [10] https://etbe.coker.com.au/2025/08/02/server-cpu-sockets/

05 June, 2026 07:31AM by etbe