I have been helping co-maintain the Debian curl package for a few

years now, and even though Samuel and Charles do most of the work, I'm

happy to jump in and help when needed. This is one of those cases.

Nowadays the package is maintained by 3 people (with help from others

occasionally), but it hasn't always been like this. Samuel adopted

the package back in 2021, and since then it has received a lot of love

and care to make sure it lives up to Debian's standards. Again, kudos

to both him and Charles who have been doing great work on this front.

But a little more than 20 years ago, the situation in Debian (and

curl!) was "a bit" different.

Once upon a time...

According to d/changelog, the Debian curl maintainer in 2005

introduced changes to the packaging that allowed it to generate a

version of libcurl for each TLS backend available: OpenSSL and

GnuTLS. This meant that curl would have two binary library packages:

- libcurl3-openssl and its respective -dev variant, for libcurl linked against OpenSSL; and

- libcurl3-gnutls and its respective -dev variant, for libcurl linked against GnuTLS.

But then, around 2006/2007 or so, upstream curl decided to bump the

SONAME version of libcurl from 3 to 4. At the time, they apparently

did not version their library symbols like they do now, which

was... less than ideal. I don't judge them: curl and a lot of other

important projects have come a long way when we consider best

practices to write shared libraries.

Meanwhile, in Debian land, the release team was having trouble with

other transitions going on at the time. For those who are not versed

in Debian's vocabulary, a transition happens when a shared library

gets its SONAME version bumped: when this happens, we have to make

sure that all reverse dependencies of that library still build with

the new version, and fix things that fail. The more reverse

dependencies the library has, the harder this work gets.

When upstream curl bumped the SONAME version of libcurl, the Debian

curl maintainer at the time correctly renamed the binary packages from

libcurl3-{openssl,gnutls} (and their -dev variants) to

libcurl4-{openssl,gnutls} (and their -dev variants), which

obviously triggered a transition. And a big one, because libcurl is

used by several projects.

Long story short, the Debian release team found themselves between a

rock and a hard place. According to the late Steve Langasek at the

time:

We talked a while back about the curl transition, and about how upstream's

change from libcurl.so.3 to libcurl.so.4 is gratuitously painful for us in

light of the large number of reverse dependencies.

The libcurl transition has at this point gotten tangled with soname

transitions in jasper, exiv2, kexiv2, and God only knows what else. So I'd

like to revisit this question, because tracking this transition is costing

the release team a lot of time that would be better spent elsewhere, and

removing the need for a libcurl transition promises to reduce the complexity

of the other components by an order of magnitude.

On looking at the curl package, I've come to understand that the

symbol versioning in place in this library is the result of a

Debian-local patch. That's great news, because it suggests a solution

to this quandary that doesn't require an unreasonable amount of

developer time.

Yeah, it wasn't pretty. Here's what was proposed:

I am proposing the following:

- Keep the library soname the same as it currently is upstream. Because

upstream uses unversioned symbols, our package will be binary-compatible

with applications built against the upstream libcurl regardless of what we

do with symbol versioning, so leaving the soname alone minimizes the

amount of patching to be done against upstream code here.

- Revert the Debian symbol versioning to the libcurl3 version, and make

libcurl.so.3 a symlink to libcurl.so.4. We have already established that

libcurl.so.4 is still API-compatible with libcurl.so.3, in spite of the

soname change upstream; reverting the symbol versioning will make it fully

ABI-compatible with libcurl.so.3, and adding the symlink lets

previously-built binaries find it.

- Revert the Debian package names to the curl 7.15.5 versions. Because

compatibility has been restored with libcurl3 and libcurl3-gnutls,

restoring the package names provides the best upgrade path from etch to

lenny; and because the symbol versions have been reverted, the libraries

are not binary-compatible with the Debian packages currently named

libcurl4/libcurl4-gnutls/libcurl4-openssl (in spite of being

binary-compatible with upstream), so it would be wrong to keep the current

names regardless.

- Drop the SSL-less variant of the library, which was not present in curl

7.15.5; AFAICS, there is no use case where a user of curl needs to not

have SSL support, so this split seems to be unnecessary overhead. Please

correct me if I'm mistaken.

- Leave the -dev package names alone otherwise, to simplify binNMUing of the

reverse-dependencies (some packages have already added versioned

build-deps on libcurl4.*-dev -- I have no idea why -- so reverting the

names would mean more work to chase down those packages). Drop

libcurl4-dev as a binary package, though, in favor of being Provided by

libcurl4-gnutls-dev. Many of the packages currently build-depending on

libcurl4-dev -- including some that wrongly used libcurl3-dev before --

are GPL, and these are apparently all packages where having SSL support

missing in libcurl4 wasn't hurting them, so libcurl4-gnutls-dev seems to

be the reasonable "default" here.

- Schedule binNMUs for all reverse-dependencies.

Again, no judgement here: this was what needed to be done at the time,

and I believe it was a good solution given the circumstances.

In the end, the binary library packages got renamed again: from

libcurl4-{openssl,gnutls} back to libcurl3-{openssl,gnutls} (but

not their -dev variants!), but they continued shipping

libcurl libraries whose SONAME version was 4. This solved the

immediate problem of untangling the transitions mentioned by Steve,

but introduced a technical debt that would stick with the package

literally for decades.

The situation at the end of 2007 was:

- libcurl3-openssl with libcurl4-openssl-dev; and

- libcurl3-gnutls with libcurl4-gnutls-dev.

More discrepancy is added

Eventually the libcurl3-openssl package got renamed to libcurl3,

but aside from that the situation with mismatched library names

vs. SONAME versions stayed relatively unchanged until around 2018,

when the Debian curl maintainer at the time (a different person)

renamed libcurl3 to libcurl4 to fix a bug. This was the right

thing to do for libcurl3, and at the time upstream curl was already

properly versioning their symbols, but for some reason

libcurl3-gnutls got left behind. So now we had:

- libcurl4 with libcurl4-dev; and

- libcurl3-gnutls with libcurl4-gnutls-dev.

In other words, we now have a discrepancy between the OpenSSL and

GnuTLS variants' names. Yeah, confusing. And this is the situation

right now, in May 2026, while I write this post.

To make matters worse, the Debian curl package has been carrying a

patch to facilitate the split of OpenSSL and GnuTLS flavours for

decades now, and, for some reason I didn't bother to investigate, the

patch pins the SONAME version of libcurl3-gnutls to CURL_GNUTLS_3,

effectively overriding upstream's decision to version the symbols as

CURL_GNUTLS_4.

A call to make things right

Back in 2022, Simon McVittie filed a Debian bug to try and call our

attention to the fact that we were shipping this messy set of curl

packages. I had just started to get involved in the package

maintenance and Samuel asked me to take a look at the bug. I noticed

it was going to take more time than I had available, so I decided to

put it on my TODO list (TM).

Simon was generous enough to lay out a possible plan to tackle the

problem, but I had a feeling that this was going to be harder than it

looked. I kept postponing working on the bug, but also kept thinking

about it now and then because it's an interesting thing to solve.

Then, a month or so ago the Debian Brasil community got together for

MiniDebConf Campinas 2026 and we decided to do a bug squashing party

there. I started working on a few FTBFS bugs with GCC 16, but then

remembered the curl bug and thought that that was the

perfect time and place to start working on it, for a few reasons:

- Samuel and Charles were also attending the conference, so I could

talk to them about my plans and show them a PoC.

- I was going to give a presentation about symbols (in pt_BR), so I

could use this bug as an example of symbol versioning.

- I wanted to have fun.

The initial plan

The plan I had in mind was a variant of Simon's proposed plan:

- I would have to adjust our GnuTLS-specific patch so that it did not override the SONAME version for libcurl-gnutls. Then,

- For each symbol from libcurl3-gnutls I would have to:

  - Explicitly version it as curl_symbol_name@@CURL_GNUTLS_4.

  - Create an alias for the symbol (let's call it __curl_compat_symbol_name).

  - Explicitly version this alias as __curl_compat_symbol_name@CURL_GNUTLS_3.

- Have a separate version of curl's linker script to make it possible to create a hierarchy between CURL_GNUTLS_3 and CURL_GNUTLS_4 symbols.

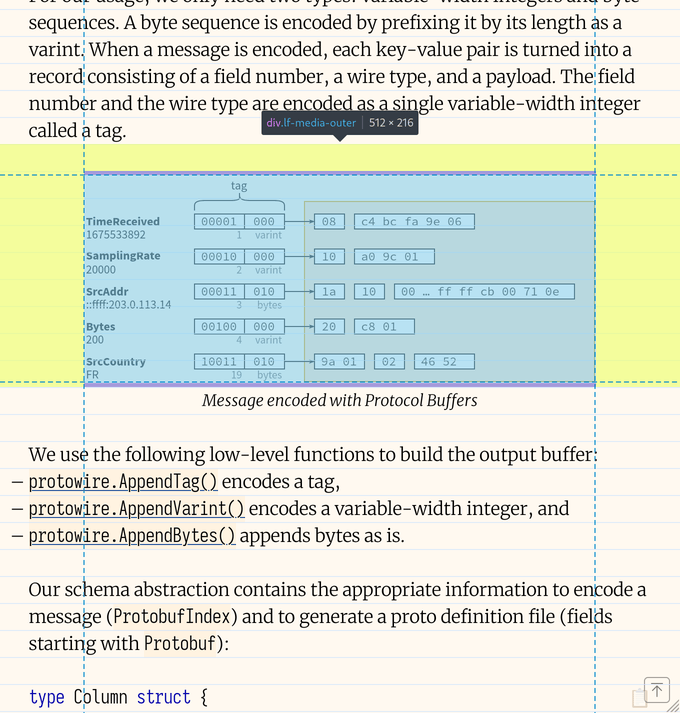

Note that this whole dance is needed because it is a hard requirement

that programs linked against libcurl3-gnutls keep working when we

ship libcurl4-gnutls, without needing to recompile them. Due to the

fact that we will not really bump the SONAME of libcurl-gnutls (but

instead fix the symbol versions shipped by it), we cannot expect

programs to break given that they are actually using the exact same

ABI as before.

Unfortunately (as is common with low-level tools) the documentation

for ld's versioning syntax is quite incomplete and hard to find.

One of the best sources I found was this blog post. For this reason,

let me quickly explain the different notations for symbol versioning

used above.

curl_symbol_name@@CURL_GNUTLS_4

When we use curl_symbol_name@@CURL_GNUTLS_4 (note the @@) we are

telling the linker that this should be considered the default

version of curl_symbol_name. In other words, when a binary that

links against libcurl-gnutls calls curl_symbol_name, the linker

should use curl_symbol_name@@CURL_GNUTLS_4 to resolve the symbol.

There are a few ways to specify a symbol version in C/C++:

    __attribute__((__symver__("curl_symbol_name@@CURL_GNUTLS_4")))
    void curl_symbol_name()
    {
      /* ... */
    }

    /* or... */

    void curl_symbol_name()
    {
      /* ... */
    }
    __asm__(".symver curl_symbol_name, curl_symbol_name@@CURL_GNUTLS_4");

Function alias

Creating an alias for a function is basically saying that a function

can be called by another name. You can do that in C/C++ like:

    void curl_symbol_name()
    {
      /* ... */
    }

    void __curl_compat_symbol_name()
      __attribute__((alias("curl_symbol_name")));
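
To see the alias mechanism in isolation, here is a minimal sketch with made-up names (demo_answer, demo_answer_compat and demo_names_match are hypothetical and not part of curl or the Debian patch). It assumes GCC or Clang on an ELF target, where the alias attribute is available:

```c
/* The "real" function. The name is hypothetical, for illustration only. */
int demo_answer(void)
{
    return 42;
}

/* A second name for the very same code; no new function body is emitted. */
int demo_answer_compat(void) __attribute__((alias("demo_answer")));

/* Returns 1 if both names resolve to the same address, 0 otherwise. */
int demo_names_match(void)
{
    return demo_answer_compat == demo_answer;
}
```

Calling demo_answer_compat() runs the body of demo_answer(), and demo_names_match() should report that both names point at the same address.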

__curl_compat_symbol_name@CURL_GNUTLS_3

Finally, when we use __curl_compat_symbol_name@CURL_GNUTLS_3 (note

the single @) we are telling the linker that this symbol exists, but

it should not be used as the default symbol. In fact, this

notation will basically hide the symbol and make it only available for

those programs that have already been linked against it. It's a way

of saying "don't offer this symbol when linking, but it's here in case

a program needs it to run" (it's a bit more complicated than that, but

you get the point).

The reason I had to create an alias to the function before

versioning the symbol with @CURL_GNUTLS_3 is that, once I've

versioned the main symbol as @@CURL_GNUTLS_4, I can't create another

version of it. It's also important to mention that to be able to

create a version for the alias I also had to change its visibility to

default. In the end, the alias ended up being defined as:

    extern void __curl_compat_symbol_name()
      __attribute__((alias("curl_symbol_name"), visibility("default")));

First attempt and lessons learned

For my PoC I decided to tackle a small subset of the problem. The

symbols file for libcurl3-gnutls contains around 100 symbols that

need to be fixed, so I chose two of them and started trying to write a

patch to see if I could make things work. And after some time

struggling with GCC's syntax and inspecting nm -D's output I finally

got something that looked like it was going to work. The two symbols

I had chosen to work with got correctly versioned (both as

@@CURL_GNUTLS_4 and @CURL_GNUTLS_3), and a quick-and-dirty C

program that used those symbols correctly compiled and ran with the

expected symbols. I showed the results to Samuel and Charles, we got

excited about what we saw, and then the conference ended.
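
When reading symbol listings, the separator is what tells the two notations apart. The following is a small sketch, assuming the listing prints versioned symbols as name@NODE or name@@NODE (recent versions of nm -D do this, but treat the exact output format as an assumption). classify_symbol and the enum are hypothetical helpers, not something from the patch, and the @@@ form is deliberately not handled because it only exists as assembler input:

```c
#include <stddef.h>
#include <string.h>

/* How a symbol name is bound to a version node. */
enum symver_kind {
    SYMVER_NONE,    /* plain "name", no version attached         */
    SYMVER_COMPAT,  /* "name@NODE": kept for already-linked code  */
    SYMVER_DEFAULT  /* "name@@NODE": what new links bind against  */
};

/*
 * Classify one symbol token. If it carries a version, *node is set to the
 * version node name inside the same string; otherwise *node is set to NULL.
 * The node argument may itself be NULL if the caller does not need it.
 */
enum symver_kind classify_symbol(const char *token, const char **node)
{
    const char *at = strchr(token, '@');

    if (node != NULL)
        *node = NULL;
    if (at == NULL)
        return SYMVER_NONE;
    if (at[1] == '@') {
        if (node != NULL)
            *node = at + 2;
        return SYMVER_DEFAULT;
    }
    if (node != NULL)
        *node = at + 1;
    return SYMVER_COMPAT;
}
```

For the two symbols in the PoC, the goal was to see each name once as SYMVER_DEFAULT under CURL_GNUTLS_4 and once as SYMVER_COMPAT under CURL_GNUTLS_3.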

Second attempt and some adjustments

After getting back home I resumed the work on my branch and wrote an

Emacs function that semi-automatically adjusted all 100+ symbols

listed in the symbols file so that they all looked like:

    __attribute__((__symver__("curl_symbol_name@@CURL_GNUTLS_4")))
    void curl_symbol_name()
    {
      /* ... */
    }

    extern void __curl_compat_symbol_name()
      __attribute__((alias("curl_symbol_name"), visibility("default"),
                     symver("__curl_compat_symbol_name@CURL_GNUTLS_3")));

The patch was big but mostly repetitive, and I was happy to have come

up with a solution that looked clean. Until I tried to build the

package, that is.

I started seeing some strange errors that happened when ld was

trying to link the final libcurl4-gnutls object (yes, at that point

I had already renamed the binary package). This is one of the errors

I was getting from ld (I got variants of this error as I was trying

to fix the approach):

    /usr/bin/x86_64-linux-gnu-ld.bfd: .libs/libcurl_gnutls_la-easy.o: in function `dupeasy_meta_freeentry':
    ./debian/build-gnutls/lib/./debian/build-gnutls/lib/easy.c:1024: multiple definition of `curl_easy_cleanup'; .libs/libcurl_gnutls_la-easy.o:./debian/build-gnutls/lib/./debian/build-gnutls/lib/easy.c:908: first defined here
    /usr/bin/x86_64-linux-gnu-ld.bfd: .libs/libcurl-gnutls.so.4.8.0: version node not found for symbol curl_easy_duphandle@CURL_GNUTLS3
    /usr/bin/x86_64-linux-gnu-ld.bfd: failed to set dynamic section sizes: bad value

This was strange. I did some tests with very simple versions of a

shared library using the versioning mechanism I had implemented and it

all worked. I could not reproduce the problem, and that's not a great

feeling to have.

Then, after reading a lot of documentation and blog posts throughout

the internet I found something interesting. Apparently ld has a

limitation when it comes to dealing with symbols versioned with @@.

If there is a single symbol versioned like that in a source file (the

actual term is TU, which means Translation Unit, but let's

simplify), then ld is happy and generates the expected version

without issues. But when we're dealing with multiple definitions of

@@ symbols in a source file (which is exactly what happens in curl),

then ld can get confused and start giving errors during the link

stage.

To solve that limitation, we have to resort to yet another symbol

versioning notation: @@@. Yes, three at signs. For example:

    void curl_symbol_name()
    {
      /* ... */
    }
    __asm__(".symver curl_symbol_name, curl_symbol_name@@@CURL_GNUTLS_4");

Note that we have to use __asm__ because GCC's __attribute__

doesn't support the triple-at notation.

What this does is tell the linker to create a versioned symbol for

curl_symbol_name, set it as the default symbol when linking, but

also remove the unversioned curl_symbol_name symbol. This makes

ld happy and allows it to successfully link libcurl-gnutls. As

usual, you won't find any mention of the @@@ notation inside ld's

documentation.
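
Since the same directive has to be repeated for every exported function, one could also generate it with the preprocessor instead of an editor function. This is only a sketch of that idea and not what the patch does: SYMVER_DEFAULT_DIRECTIVE, SYMVER_COMPAT_DIRECTIVE and demo_default_directive are hypothetical names, and the only thing taken from the assembler is the general `.symver name, name2@node` syntax:

```c
/* Build the directive that marks `sym` as the default version in `node`. */
#define SYMVER_DEFAULT_DIRECTIVE(sym, node) \
    ".symver " #sym ", " #sym "@@@" #node

/* Build the directive that exports `local` as the non-default
 * `exported@node`. */
#define SYMVER_COMPAT_DIRECTIVE(local, exported, node) \
    ".symver " #local ", " #exported "@" #node

/*
 * Intended use, next to a function definition (not done here, because it
 * only makes sense when building a shared library with a version script):
 *
 *   __asm__(SYMVER_DEFAULT_DIRECTIVE(curl_symbol_name, CURL_GNUTLS_4));
 */

/* Return the generated text so it can be inspected or printed. */
const char *demo_default_directive(void)
{
    return SYMVER_DEFAULT_DIRECTIVE(curl_symbol_name, CURL_GNUTLS_4);
}
```

The string returned by demo_default_directive() is the same directive shown above for curl_symbol_name, which makes it easy to check the macro before sprinkling it over 100+ functions.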

With libcurl-gnutls compiling again, I had to adjust libcurl's

linker script to create a hierarchy between CURL_GNUTLS_3 and

CURL_GNUTLS_4 symbols. Here's the final version of the file:

    CURL_GNUTLS_3
    {
      global:
        curl_easy_cleanup;
        /* lots of other symbols here */
      local: *;
    };

    CURL_GNUTLS_4
    {
      global: curl_*;
      local: *;
    } CURL_GNUTLS_3;

Debian package adjustments

After getting the hard part out of the way, the rest was easy. It was

time to finally rename libcurl3-gnutls to libcurl4-gnutls.

Initially I was thinking that I'd need to ask the release team for a

transition to happen, but as it turns out that won't be necessary.

Because we are effectively shipping the same exact library/ABI and the

only difference is the inclusion of the extra CURL_GNUTLS_4

versioned symbols, and given that we will be shipping CURL_GNUTLS_3

versioned symbols to guarantee backwards compatibility, packages won't

need to get rebuilt just to pick up the new dependency. Instead, we

can safely turn libcurl3-gnutls into a transitional package that

depends on libcurl4-gnutls.

Merge request and next steps

This is the merge request where I am working on the fix. As of this

writing it is in a draft state, but I expect to merge it in the next

couple of days. Once the fixed curl package is uploaded, we should

keep an eye on the archive to make sure no unexpected bugs happen.

I would like to carry this patch downstream at least until forky is

released. It doesn't make sense to propose it upstream because this

problem is Debian-specific and should be fixed there. We will need to

make sure that all reverse dependencies of libcurl3-gnutls are

recompiled before we can get rid of the transitional package, too.

This was a fun bug to investigate and fix, and I am happy that we will

finally have sensible names (and symbol versions!) for both of our

libcurl variants. Stay tuned for the next challenge!