This is a lengthy blog post. It features a long introduction that explains how apt update acquires various files from a package repository, what Acquire-By-Hash is, and how it all works for Kali Linux: a Debian-based distro that doesn't support Acquire-By-Hash, and which is distributed via a network of mirrors and a redirector.

In a second part, I explore some "Hash Sum Mismatch" errors that we can hit with Kali Linux, errors that would not happen if only Acquire-By-Hash was supported. If anything, this blog post supports the case for adding Acquire-By-Hash support in reprepro, as requested at https://bugs.debian.org/820660.

All of this could have just remained some personal notes for myself, but I got carried away and turned it into a blog post, dunno why... Hopefully others will find it interesting, but you really need to like troubleshooting stories, packed with details, and poorly written at that. You've been warned!

Introducing Acquire-By-Hash

Acquire-By-Hash is a feature of APT package repositories, that might or might not be supported by your favorite Debian-based distribution. A repository that supports it says so, in the Release file, by setting the field Acquire-By-Hash: yes.

It's easy to check. Debian and Ubuntu both support it:

$ wget -qO- http://deb.debian.org/debian/dists/sid/Release | grep -i ^Acquire-By-Hash:

Acquire-By-Hash: yes

$ wget -qO- http://archive.ubuntu.com/ubuntu/dists/devel/Release | grep -i ^Acquire-By-Hash:

Acquire-By-Hash: yes

What about other Debian derivatives?

$ wget -qO- http://http.kali.org/kali/dists/kali-rolling/Release | grep -i ^Acquire-By-Hash: || echo not supported

not supported

$ wget -qO- https://archive.raspberrypi.com/debian/dists/trixie/Release | grep -i ^Acquire-By-Hash: || echo not supported

not supported

$ wget -qO- http://packages.linuxmint.com/dists/faye/Release | grep -i ^Acquire-By-Hash: || echo not supported

not supported

$ wget -qO- https://apt.pop-os.org/release/dists/noble/Release | grep -i ^Acquire-By-Hash: || echo not supported

not supported

Huhu, Acquire-By-Hash is not ubiquitous. But wait, what is Acquire-By-Hash to start with? To answer that, we have to take a step back and cover some basics first.

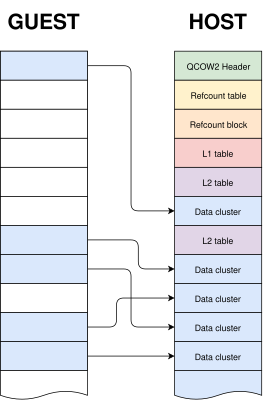

The HTTP requests performed by 'apt update'

What happens when one runs apt update? APT first requests the Release file from the repository(ies) configured in the APT sources. This file is a starting point, it contains a list of other files (sometimes called "Index files") that are available in the repository, along with their hashes. After fetching the Release file, APT proceeds to request those Index files. To give you an idea, there are many kinds of Index files, among which:

- Packages files: list the binary packages that are available in the repository

- Sources files: list the source packages that are available in the repository

- Contents files: list files provided by each package (used by the command apt-file)

- and even more, such as PDiff, Translations, DEP-11 metadata, etc.

There's an excellent Wiki page that details the structure of a Debian package repository, it's there: https://wiki.debian.org/DebianRepository/Format.
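To make "a list of other files along with their hashes" a bit more concrete, here is a small shell sketch that prints the SHA256 entries of a Release file saved locally. The function name release_sha256_entries is made up for this post, and the sketch assumes the usual layout: a SHA256: field followed by indented lines holding a hash, a size and a path.

```shell
# Print the SHA256 entries of a Release file as "<hash> <size> <path>" lines.
# Assumption: entries are the indented lines that follow the "SHA256:" field.
release_sha256_entries() {
    awk '
        /^SHA256:/ { in_section = 1; next }   # start of the SHA256 field
        /^[^ ]/    { in_section = 0 }         # any other field ends the section
        in_section && NF == 3 { print $1, $2, $3 }
    ' "$1"
}
```

For instance, after saving the Kali Release file with wget -qO Release http://http.kali.org/kali/dists/kali-rolling/Release, piping the output of release_sha256_entries Release through grep binary-amd64/Packages should show the entries that APT validates the Packages files against.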

Note that APT doesn't necessarily download ALL of those Index files. For simplicity, we'll limit ourselves to the minimal scenario, where apt update downloads only the Packages files.

Let's try to make it more visual: here's a representation of an apt update transaction, assuming that all the components of the repository are enabled:

apt update -> Release -> Packages (main/amd64)
                      -> Packages (contrib/amd64)
                      -> Packages (non-free/amd64)
                      -> Packages (non-free-firmware/amd64)

Meaning that, in a first step, APT downloads the Release file, reads its content, and then in a second step it downloads the Index files in parallel.

You can actually see that happen with a command such as apt -q -o Debug::Acquire::http=true update 2>&1 | grep ^GET. For Kali Linux you'll see something pretty similar to what I described above. Try it!

$ podman run --rm kali-rolling apt -q -o Debug::Acquire::http=true update 2>&1 | grep ^GET

GET /kali/dists/kali-rolling/InRelease HTTP/1.1 # <- returns a redirect, that is why the file is requested twice

GET /kali/dists/kali-rolling/InRelease HTTP/1.1

GET /kali/dists/kali-rolling/non-free/binary-amd64/Packages.gz HTTP/1.1

GET /kali/dists/kali-rolling/main/binary-amd64/Packages.gz HTTP/1.1

GET /kali/dists/kali-rolling/non-free-firmware/binary-amd64/Packages.gz HTTP/1.1

GET /kali/dists/kali-rolling/contrib/binary-amd64/Packages.gz HTTP/1.1

However, and it's now becoming interesting, for Debian or Ubuntu you won't see the same kind of URLs:

$ podman run --rm debian:sid apt -q -o Debug::Acquire::http=true update 2>&1 | grep ^GET

GET /debian/dists/sid/InRelease HTTP/1.1

GET /debian/dists/sid/main/binary-amd64/by-hash/SHA256/22709f0ce67e5e0a33a6e6e64d96a83805903a3376e042c83d64886bb555a9c3 HTTP/1.1

APT doesn't download a file named Packages, instead it fetches a file named after a hash. Why? This is due to the field Acquire-By-Hash: yes that is present in Debian's Release file.

What does Acquire-By-Hash mean for 'apt update'

The idea with Acquire-By-Hash is that the Index files are named after their hash on the repository, so if the MD5 sum of main/binary-amd64/Packages is 77b2c1539f816832e2d762adb20a2bb1, then the file will be stored at main/binary-amd64/by-hash/MD5Sum/77b2c1539f816832e2d762adb20a2bb1. The path main/binary-amd64/Packages still exists (it's the "Canonical Location" of this particular Index file), but APT won't use it, instead it downloads the file located in the by-hash/ directory.
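As a rough illustration of that naming scheme, the sketch below derives the by-hash location of an Index file from its content. It uses SHA256 (the hash seen in the Debian trace earlier) rather than MD5, relies on sha256sum from coreutils, and the function name by_hash_path is made up for this post.

```shell
# Print the by-hash location that corresponds to an Index file.
# Assumption: SHA256 is the hash in use, and sha256sum is available.
by_hash_path() {
    index_file=$1
    dir=$(dirname "$index_file")
    # sha256sum prints "<hash>  <file>", keep only the hash
    sum=$(sha256sum "$index_file" | cut -d' ' -f1)
    printf '%s/by-hash/SHA256/%s\n' "$dir" "$sum"
}
```

Calling by_hash_path main/binary-amd64/Packages on a local copy of the file prints a path of the form main/binary-amd64/by-hash/SHA256/ followed by the hash.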

Why does it matter? This has to do with repository updates, and allowing the package repository to be updated atomically, without interruption of service, and without risk of failure client-side.

It's important to understand that the Release file and the Index files are part of a whole, a set of files that go together, given that Index files are validated by their hash (as listed in the Release file) after download by APT.

If those files are simply named "Release" and "Packages", it means they are not immutable: when the repository is updated, all of those files are updated "in place". And it causes problems. A typical failure mode for the client, during a repository update, is that: 1) APT requests the Release file, then 2) the repository is updated and finally 3) APT requests the Packages files, but their checksums don't match those listed in the Release file it already has, causing apt update to fail. There are variations of this error, but you get the idea: updating a set of files "in place" is problematic.
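The validation step that fails in this scenario can be pictured with the sketch below. It is only an illustration of the idea, not APT's actual code: the function name verify_index and its messages are made up, and it checks the size first, then the SHA256 sum, against the values taken from the Release file.

```shell
# Check a downloaded Index file against the size and SHA256 sum
# listed in the Release file. Prints "ok" or the reason for the failure.
verify_index() {
    file=$1
    expected_size=$2
    expected_sha256=$3

    actual_size=$(wc -c < "$file" | tr -d ' ')
    if [ "$actual_size" != "$expected_size" ]; then
        echo "unexpected size ($actual_size != $expected_size)"
        return 1
    fi

    actual_sha256=$(sha256sum "$file" | cut -d' ' -f1)
    if [ "$actual_sha256" != "$expected_sha256" ]; then
        echo "hash sum mismatch"
        return 1
    fi

    echo "ok"
}
```

If the Release file was fetched before the repository update and the Packages file after it, the expected values describe the old file while the download is the new one, so the check fails even though nothing is corrupted.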

The Acquire-By-Hash mechanism was introduced exactly to solve this problem: now the Index files have a unique, immutable name. When the repository is updated, the new Index files are first added in the by-hash/ directory, and only afterwards is the Release file updated. Old Index files in by-hash/ are retained for a while, so there's a grace period during which both the old and the new Release files are valid and working: the Index files that they refer to are available in the repo. As a result: no interruption of service, no failure client-side during repository updates.
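The ordering matters more than anything else here, so here is a toy sketch of it. Everything in it is an assumption made for illustration: the function name publish_index, the directory layout, and above all the "Release" file, which is reduced to a single line holding the current hash. A real repository tool does much more than this.

```shell
# Toy publisher: "$repo" holds a by-hash/SHA256/ directory and a one-line
# stand-in for the Release file that only records the current hash.
publish_index() {
    repo=$1
    new_index=$2
    sum=$(sha256sum "$new_index" | cut -d' ' -f1)

    # Step 1: add the new Index file under its immutable, hash-based name.
    mkdir -p "$repo/by-hash/SHA256"
    cp "$new_index" "$repo/by-hash/SHA256/$sum"

    # Step 2: only afterwards, switch the (toy) Release file to the new hash.
    # Writing to a temporary name and renaming keeps the switch atomic.
    printf '%s\n' "$sum" > "$repo/Release.new"
    mv "$repo/Release.new" "$repo/Release"

    # Step 3 (not shown): prune old by-hash files once the grace period is over.
}
```

After two successive calls, both hash-named files are still present, so a client holding the previous Release file can still fetch the Index file it refers to.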

This is explained in more detail at https://www.chiark.greenend.org.uk/~cjwatson/blog/no-more-hash-sum-mismatch-errors.html, which is the blog post from Colin Watson that came out at the time Acquire-By-Hash was introduced in... 2016. This is still an excellent read in 2025.

So you might be wondering why I'm rambling about a problem that was solved almost 10 years ago, but as I've shown in the introduction, the problem is not solved for everyone. Server-side support for Acquire-By-Hash cannot be taken for granted, and unfortunately it never landed in reprepro, as one can see at https://bugs.debian.org/820660.

reprepro is a popular tool for creating APT package repositories. In particular, at Kali Linux we use reprepro, and that's why there's no Acquire-By-Hash: yes in the Kali Release file. As one can guess, it leads to subtle issues during those moments when the repository is updated. However... we're not ready to talk about that yet! There's still another topic that we need to cover: this window of time during which a repository is being updated, and during which apt update might fail.

The window for Hash Sum Mismatches, and the APT trick that saves the day

Pay attention! In this section, we're now talking about package repositories that do NOT support Acquire-By-Hash, such as the Kali Linux repository.

As I've said above, it's only when the repository is being updated that there is a "Hash Sum Mismatch Window", ie. a moment when apt update might fail for some unlucky clients, due to invalid Index files.

Surely, it's a very very short window of time, right? I mean, it can't take that long to update files on a server, especially when you know that a repository is usually updated via rsync, and rsync goes to great lengths to update files as atomically as it can (with the option --delay-updates). So if apt update fails for me, I've been very unlucky, but I can just retry in a few seconds and it should be fixed, right? The answer is: it's not that simple.

So far I pictured the "package repository" as a single server, for simplicity. But that's not always the case. For Kali Linux, by default users have http.kali.org configured in their APT sources, and it is a redirector, i.e. a web server that redirects requests to mirrors that are near the client. Some context that matters for what comes next: the Kali repository is synced with ~70 mirrors all around the world, 4 times a day. What happens if your apt update requests are redirected to 2 mirrors close-by, and one was just synced, while the other is still syncing (or even worse, failed to sync entirely)? You'll get a mix of old and new Index files. Hash Sum Mismatch!

As you can see, with this setup the "Hash Sum Mismatch Window" becomes much longer than a few seconds: as long as nearby mirrors are syncing, the window is open. You could have a fast and a slow mirror next to you, and they can be out of sync with each other for several minutes every time the repository is updated, for example.

For Kali Linux in particular, there's a "detail" in our network of mirrors that, as a side-effect, almost guarantees that this window lasts several minutes at least. This is because the pool of mirrors includes kali.download which is in fact the Cloudflare CDN, and from the redirector's point of view, it's seen as a "super mirror" that is present in every country. So when APT fires a bunch of requests against http.kali.org, it's likely that some of them will be redirected to the Kali CDN, and others will be redirected to a mirror near you. So far so good, but there's another point of detail to be aware of: the Kali CDN is synced first, before the other mirrors. Another thing: usually the mirrors that are the farthest from the Tier-0 mirror take the longest to sync. Packing all of that together: if you live somewhere in Asia, it's not uncommon for your "Hash Sum Mismatch Window" to be as long as 30 minutes, between the moment the Kali CDN is synced, and the moment your nearby mirrors catch up and are finally in sync as well.

Having said all of that, and assuming you're still reading (anyone here?), you might be wondering... Does that mean that apt update is broken 4 times a day, for around 30 minutes, for every Kali user out there? How can they bear with that? Answer is: no, of course not, it's not broken like that. It works despite all of that, and this is thanks to yet another detail that we didn't go into yet. This detail lies in APT itself.

APT is in fact "redirector aware", in a sense. When it fetches a Release file, and if ever the request is redirected, it then fires the subsequent requests against the server where it was initially redirected. So you are guaranteed that the Release file and the Index files are retrieved from the same mirror! Which brings back our "Hash Sum Mismatch Window" to the window for a single server, ie. something like a few seconds at worst, hopefully. And that's what makes it work for Kali, literally. Without this trick, everything would fall apart.

For reference, this feature was implemented in APT back in... 2016! A busy year it seems! Here's the link to the commit: use the same redirection mirror for all index files.

To finish, a dump from the console. You can see this behaviour play out easily, again with APT debugging turned on. Below we can see that only the first request hits the Kali redirector:

$ podman run --rm kali-rolling apt -q -o Debug::Acquire::http=true update 2>&1 | grep -e ^Answer -e ^HTTP

Answer for: http://http.kali.org/kali/dists/kali-rolling/InRelease

HTTP/1.1 302 Found

Answer for: http://mirror.freedif.org/kali/dists/kali-rolling/InRelease

HTTP/1.1 200 OK

Answer for: http://mirror.freedif.org/kali/dists/kali-rolling/non-free-firmware/binary-amd64/Packages.gz

HTTP/1.1 200 OK

Answer for: http://mirror.freedif.org/kali/dists/kali-rolling/contrib/binary-amd64/Packages.gz

HTTP/1.1 200 OK

Answer for: http://mirror.freedif.org/kali/dists/kali-rolling/main/binary-amd64/Packages.gz

HTTP/1.1 200 OK

Answer for: http://mirror.freedif.org/kali/dists/kali-rolling/non-free/binary-amd64/Packages.gz

HTTP/1.1 200 OK
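The URL rewriting visible in this dump can be mimicked with a few lines of shell. To be clear, this is not how APT implements it: pin_to_mirror is a made-up helper that only shows the idea, namely taking the mirror base from the redirected Release URL and reusing it for an Index file.

```shell
# Given the Release URL that was requested, the URL it was redirected to,
# and the URL of an Index file, print the Index URL on the same mirror.
# Assumption: both Release URLs contain a "/dists/" path component.
pin_to_mirror() {
    requested_release=$1
    redirected_release=$2
    index_url=$3

    # Everything before "/dists/" is the base URL of the repository
    requested_base=${requested_release%%/dists/*}
    mirror_base=${redirected_release%%/dists/*}

    # Swap the redirector base for the mirror base
    path=${index_url#"$requested_base"}
    printf '%s%s\n' "$mirror_base" "$path"
}
```

With the URLs from the dump, the request for main/binary-amd64/Packages.gz on http.kali.org becomes a request for the same path on mirror.freedif.org, which is what the Answer lines show.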

Interlude

Believe it or not, we're done with the introduction! At this point, we have a good understanding of what apt update does (in terms of HTTP requests), we know that Release files and Index files are part of a whole, and we know that a repository can be updated atomically thanks to the Acquire-By-Hash feature, so that users don't experience interruption of service or failures of any sort, even with a rolling repository that is updated several times a day, like Debian sid.

We've also learnt that, despite the fact that Acquire-By-Hash landed almost 10 years ago, some distributions like Kali Linux are still doing without it... and yet it works! But the reason why it works is more complicated to grasp, especially when you add a network of mirrors and a redirector to the picture. Moreover, it doesn't work as flawlessly as with the Acquire-By-Hash feature: we still expect some short (seconds at worst) "Hash Sum Mismatch Windows" for those unlucky users that run apt update at the wrong moment.

This was a long intro, but that really sets the stage for what comes next: the edge cases. Some situations in which we can hit some Hash Sum Mismatch errors with Kali. Error cases that I've collected and investigated over time...

If anything, it supports the case that Acquire-By-Hash is really something that should be implemented in reprepro. More on that in the conclusion, but for now, let's look at those edge cases.

Edge Case 1: the caching proxy

If you put a caching proxy (such as approx, my APT caching proxy of choice) between yourself and the actual package repository, then obviously it's the caching proxy that performs the HTTP requests, and therefore APT will never know about the redirections returned by the server, if any. So the APT trick of downloading all the Index files from the same server in case of redirect doesn't work anymore.

It was rather easy to confirm that by building a Kali package during a mirror sync, and watching it fail at the "Update chroot" step:

$ sudo rm /var/cache/approx/kali/dists/ -fr

$ gbp buildpackage --git-builder=sbuild

+------------------------------------------------------------------------------+

| Update chroot Wed, 11 Jun 2025 10:33:32 +0000 |

+------------------------------------------------------------------------------+

Get:1 http://http.kali.org/kali kali-dev InRelease [41.4 kB]

Get:2 http://http.kali.org/kali kali-dev/contrib Sources [81.6 kB]

Get:3 http://http.kali.org/kali kali-dev/main Sources [17.3 MB]

Get:4 http://http.kali.org/kali kali-dev/non-free Sources [122 kB]

Get:5 http://http.kali.org/kali kali-dev/non-free-firmware Sources [8297 B]

Get:6 http://http.kali.org/kali kali-dev/non-free amd64 Packages [197 kB]

Get:7 http://http.kali.org/kali kali-dev/non-free-firmware amd64 Packages [10.6 kB]

Get:8 http://http.kali.org/kali kali-dev/contrib amd64 Packages [120 kB]

Get:9 http://http.kali.org/kali kali-dev/main amd64 Packages [21.0 MB]

Err:9 http://http.kali.org/kali kali-dev/main amd64 Packages

File has unexpected size (20984689 != 20984861). Mirror sync in progress? [IP: ::1 9999]

Hashes of expected file:

- Filesize:20984861 [weak]

- SHA256:6cbbee5838849ffb24a800bdcd1477e2f4adf5838a844f3838b8b66b7493879e

- SHA1:a5c7e557a506013bd0cf938ab575fc084ed57dba [weak]

- MD5Sum:1433ce57419414ffb348fca14ca1b00f [weak]

Release file created at: Wed, 11 Jun 2025 07:15:10 +0000

Fetched 17.9 MB in 9s (1893 kB/s)

Reading package lists...

E: Failed to fetch http://http.kali.org/kali/dists/kali-dev/main/binary-amd64/Packages.gz File has unexpected size (20984689 != 20984861). Mirror sync in progress? [IP: ::1 9999]

Hashes of expected file:

- Filesize:20984861 [weak]

- SHA256:6cbbee5838849ffb24a800bdcd1477e2f4adf5838a844f3838b8b66b7493879e

- SHA1:a5c7e557a506013bd0cf938ab575fc084ed57dba [weak]

- MD5Sum:1433ce57419414ffb348fca14ca1b00f [weak]

Release file created at: Wed, 11 Jun 2025 07:15:10 +0000

E: Some index files failed to download. They have been ignored, or old ones used instead.

E: apt-get update failed

The obvious workaround is to NOT use the redirector in the approx configuration. Either use a mirror close by, or the Kali CDN:

$ grep kali /etc/approx/approx.conf

#kali http://http.kali.org/kali <- do not use the redirector!

kali http://kali.download/kali

Edge Case 2: debootstrap struggles

What if one tries to debootstrap Kali while mirrors are being synced? It can give you some ugly logs, but it might not be fatal:

$ sudo debootstrap kali-dev kali-dev http://http.kali.org/kali

[...]

I: Target architecture can be executed

I: Retrieving InRelease

I: Checking Release signature

I: Valid Release signature (key id 827C8569F2518CC677FECA1AED65462EC8D5E4C5)

I: Retrieving Packages

I: Validating Packages

W: Retrying failed download of http://http.kali.org/kali/dists/kali-dev/main/binary-amd64/Packages.gz

I: Retrieving Packages

I: Validating Packages

W: Retrying failed download of http://http.kali.org/kali/dists/kali-dev/main/binary-amd64/Packages.gz

I: Retrieving Packages

I: Validating Packages

W: Retrying failed download of http://http.kali.org/kali/dists/kali-dev/main/binary-amd64/Packages.gz

I: Retrieving Packages

I: Validating Packages

W: Retrying failed download of http://http.kali.org/kali/dists/kali-dev/main/binary-amd64/Packages.gz

I: Retrieving Packages

I: Validating Packages

I: Resolving dependencies of required packages...

I: Resolving dependencies of base packages...

I: Checking component main on http://http.kali.org/kali...

I: Retrieving adduser 3.152

[...]

To understand this one, we have to go and look at the debootstrap source code. How does debootstrap fetch the Release file and the Index files? It uses wget, and it retries up to 10 times in case of failure. It's not as sophisticated as APT: it doesn't detect when the Release file is served via a redirect.

As a consequence, what happens above can be explained as such:

- debootstrap requests the Release file, gets redirected to a mirror, and retrieves it from there

- then it requests the Packages file, gets redirected to another mirror that is not in sync with the first one, and retrieves it from there

- validation fails, since the checksum is not as expected

- try again and again

Since debootstrap retries up to 10 times, at some point it's lucky enough to get redirected to the same mirror as the one it got its Release file from, and this time it gets the right Packages file, with the expected checksum. So ultimately it succeeds.
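The retry behaviour described above boils down to a loop like the one sketched here. This is not debootstrap's code: retry_download is a made-up name, and the command passed to it stands for "fetch the Packages file and validate its checksum".

```shell
# Run a command until it succeeds, at most "$1" times.
# Returns 0 on the first success, 1 if every attempt failed.
retry_download() {
    max=$1
    shift
    attempt=1
    while [ "$attempt" -le "$max" ]; do
        if "$@"; then
            return 0
        fi
        echo "W: Retrying failed download (attempt $attempt of $max)" >&2
        attempt=$((attempt + 1))
    done
    return 1
}
```

Each attempt goes through the redirector again, so each attempt is a new chance to land on a mirror that matches the Release file. It also means that success is a matter of luck: if none of the attempts reaches such a mirror, the whole thing fails.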

Edge Case 3: post-debootstrap failure

I like this one, because it gets us to yet another detail that we didn't talk about yet.

So, what happens after we successfully debootstrapped Kali? We have only the main component enabled, and only the Index file for this component has been retrieved. It looks like this:

$ sudo debootstrap kali-dev kali-dev http://http.kali.org/kali

[...]

I: Base system installed successfully.

$ cat kali-dev/etc/apt/sources.list

deb http://http.kali.org/kali kali-dev main

$ ls -l kali-dev/var/lib/apt/lists/

total 80468

-rw-r--r-- 1 root root 41445 Jun 19 07:02 http.kali.org_kali_dists_kali-dev_InRelease

-rw-r--r-- 1 root root 82299122 Jun 19 07:01 http.kali.org_kali_dists_kali-dev_main_binary-amd64_Packages

-rw-r--r-- 1 root root 40562 Jun 19 11:54 http.kali.org_kali_dists_kali-dev_Release

-rw-r--r-- 1 root root 833 Jun 19 11:54 http.kali.org_kali_dists_kali-dev_Release.gpg

drwxr-xr-x 2 root root 4096 Jun 19 11:54 partial

So far so good. Next step would be to complete the sources.list with other components, then run apt update: APT will download the missing Index files. But if you're unlucky, that might fail:

$ sudo sed -i 's/main$/main contrib non-free non-free-firmware/' kali-dev/etc/apt/sources.list

$ cat kali-dev/etc/apt/sources.list

deb http://http.kali.org/kali kali-dev main contrib non-free non-free-firmware

$ sudo chroot kali-dev apt update

Hit:1 http://http.kali.org/kali kali-dev InRelease

Get:2 http://kali.download/kali kali-dev/contrib amd64 Packages [121 kB]

Get:4 http://mirror.sg.gs/kali kali-dev/non-free-firmware amd64 Packages [10.6 kB]

Get:3 http://mirror.freedif.org/kali kali-dev/non-free amd64 Packages [198 kB]

Err:3 http://mirror.freedif.org/kali kali-dev/non-free amd64 Packages

File has unexpected size (10442 != 10584). Mirror sync in progress? [IP: 66.96.199.63 80]

Hashes of expected file:

- Filesize:10584 [weak]

- SHA256:71a83d895f3488d8ebf63ccd3216923a7196f06f088461f8770cee3645376abb

- SHA1:c4ff126b151f5150d6a8464bc6ed3c768627a197 [weak]

- MD5Sum:a49f46a85febb275346c51ba0aa8c110 [weak]

Release file created at: Fri, 23 May 2025 06:48:41 +0000

Fetched 336 kB in 4s (77.5 kB/s)

Reading package lists... Done

E: Failed to fetch http://mirror.freedif.org/kali/dists/kali-dev/non-free/binary-amd64/Packages.gz File has unexpected size (10442 != 10584). Mirror sync in progress? [IP: 66.96.199.63 80]

Hashes of expected file:

- Filesize:10584 [weak]

- SHA256:71a83d895f3488d8ebf63ccd3216923a7196f06f088461f8770cee3645376abb

- SHA1:c4ff126b151f5150d6a8464bc6ed3c768627a197 [weak]

- MD5Sum:a49f46a85febb275346c51ba0aa8c110 [weak]

Release file created at: Fri, 23 May 2025 06:48:41 +0000

E: Some index files failed to download. They have been ignored, or old ones used instead.

What happened here? Again, we need APT debugging options to have a hint:

$ sudo chroot kali-dev apt -q -o Debug::Acquire::http=true update 2>&1 | grep -e ^Answer -e ^HTTP

Answer for: http://http.kali.org/kali/dists/kali-dev/InRelease

HTTP/1.1 304 Not Modified

Answer for: http://http.kali.org/kali/dists/kali-dev/contrib/binary-amd64/Packages.gz

HTTP/1.1 302 Found

Answer for: http://http.kali.org/kali/dists/kali-dev/non-free/binary-amd64/Packages.gz

HTTP/1.1 302 Found

Answer for: http://http.kali.org/kali/dists/kali-dev/non-free-firmware/binary-amd64/Packages.gz

HTTP/1.1 302 Found

Answer for: http://kali.download/kali/dists/kali-dev/contrib/binary-amd64/Packages.gz

HTTP/1.1 200 OK

Answer for: http://mirror.sg.gs/kali/dists/kali-dev/non-free-firmware/binary-amd64/Packages.gz

HTTP/1.1 200 OK

Answer for: http://mirror.freedif.org/kali/dists/kali-dev/non-free/binary-amd64/Packages.gz

HTTP/1.1 200 OK

As we can see above, for the Release file we get a 304 (aka. "Not Modified") from the redirector. Why is that?

This is due to the If-Modified-Since request header, specified in RFC 7232. APT uses it when it retrieves the Release file: it basically says to the server "Give me the Release file, but only if it's newer than what I already have". If the file on the server is not newer than that, the server answers with a 304, which basically says to the client "You have the latest version already". So APT doesn't get a new Release file, it uses the Release file that is already present locally in /var/lib/apt/lists/, and then it proceeds to download the missing Index files. And as we can see above: it then hits the redirector for each request, and might be redirected to a different mirror for each Index file.
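The decision made on each side can be summed up in a short sketch. Both functions are made up for this post and simplify things a lot: timestamps are plain Unix seconds instead of HTTP dates, and index_host only models the behaviour observed in the traces above, not APT's internals.

```shell
# Status a server would return for a conditional request.
# Arguments: the client's If-Modified-Since time, the file's last
# modification time on the server (both as Unix timestamps).
conditional_status() {
    if_modified_since=$1
    last_modified=$2
    if [ "$last_modified" -gt "$if_modified_since" ]; then
        echo "200"   # the server has a newer file: send it
    else
        echo "304"   # the client copy is up to date: no body, no redirect
    fi
}

# Host that the Index requests go to, depending on how the Release
# request was answered: the mirror after a redirect (302), the
# redirector itself otherwise (for example after a 304).
index_host() {
    release_status=$1
    redirector=$2
    mirror=$3
    case $release_status in
        302) echo "$mirror" ;;
        *)   echo "$redirector" ;;
    esac
}
```

One way to observe the 304 by hand, assuming curl is installed, is to send the header yourself with curl -s -o /dev/null -w '%{http_code}\n' -H 'If-Modified-Since: <date>' followed by the InRelease URL, with an HTTP date at or after the file's last modification.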

So the important bit here is: the APT "trick" of downloading all the Index files from the same mirror only works if the Release file is served via a redirect. If it's not, like in this case, then APT hits the redirector for each file it needs to download, and it's subject to the "Hash Sum Mismatch" error again.

In practice, for the casual user running apt update every now and then, it's not an issue. If they have the latest Release file, no extra requests are done, because they also have the latest Index files, from a previous apt update transaction. So APT doesn't re-download those Index files. The only reason why they'd have the latest Release file, and would miss some Index files, would be that they added new components to their APT sources, like we just did above. Not so common, and then they'd need to run apt update at an unlucky moment. I don't think many users are affected in practice.

Note that this issue is rather new for Kali Linux. The redirector running on http.kali.org is mirrorbits, and support for If-Modified-Since just landed in the latest release, version 0.6. This feature was added by none other than me, a great example of the expression "shooting oneself in the foot".

An obvious workaround here is to empty /var/lib/apt/lists/ in the chroot after debootstrap has completed, so that APT has no local Release file and fetches it again through a redirect. Or we could disable support for If-Modified-Since entirely for Kali's instance of mirrorbits.
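The first workaround can be scripted along these lines. It's a minimal sketch: clear_apt_lists is a made-up helper that takes the chroot directory as argument, it needs root privileges on a real chroot, and it relies on the -maxdepth and -delete options of find, which GNU find provides.

```shell
# Remove the cached Release and Index files from a chroot, so that the
# next "apt update" fetches everything again, starting with the Release file.
clear_apt_lists() {
    lists_dir=$1/var/lib/apt/lists
    [ -d "$lists_dir" ] || return 1
    # Only delete regular files, keep the "partial" sub-directory in place
    find "$lists_dir" -maxdepth 1 -type f -delete
}
```

For the chroot created above, that would be clear_apt_lists kali-dev, run as root before the first apt update.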

Summary and Conclusion

The Hash Sum Mismatch failures above are caused by a combination of things:

- Kali uses a redirector + a network of mirrors

- Kali repo doesn't support Acquire-By-Hash

- The fact that the redirector honors If-Modified-Since makes the matter a bit worse

At the same time:

- Regular users that just use APT to update their system or install packages are not affected by those issues

- Power users (who set up a caching proxy) or developers (who use debootstrap) are the most likely to hit those issues every now and then

- It only happens during those specific windows of time, when mirrors might not be in sync with each other, 4 times a day

- It's rather easy to work around on the user side, by NOT using the redirector

- However, unless you're deep into these things, you're unlikely to understand what caused the issues, and to magically guess the workarounds

All in all, it seems that all those issues would go away if only Acquire-By-Hash was supported in the Kali package repository.

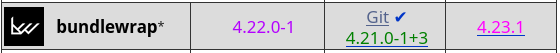

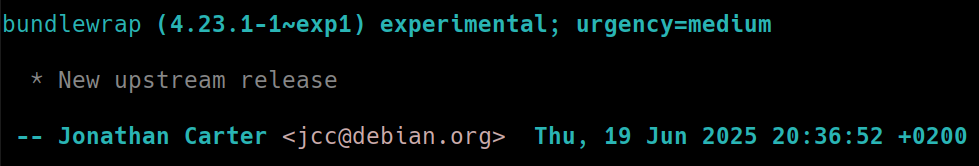

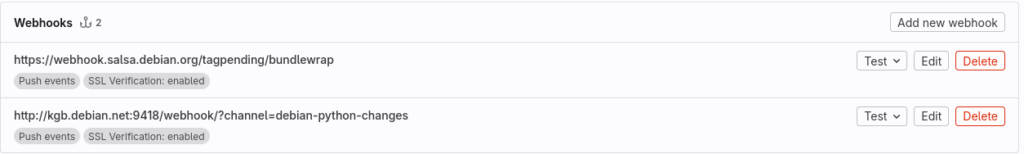

Now is not a bad moment to try to land this feature in reprepro. After development halted in 2019, there's now a new upstream, and patches are being merged again. But it won't be easy: reprepro is a C codebase of around 50k lines of code, and it will take time and effort for the newcomer to get acquainted with the codebase, to the point of being able to implement a significant feature like this one.

As an alternative, aptly is another popular tool to manage APT package repositories. And it seems to support Acquire-By-Hash already.

Another alternative: I was told that debusine has (experimental) support for package repositories, and that Acquire-By-Hash is supported as well.

Options are on the table, and I hope that Kali will eventually get support for Acquire-By-Hash, one way or another.

To finish, due credits: this blog post exists thanks to my employer OffSec.

Thanks for reading!